Revulytics sponsors a series of Product Management Today webinars featuring innovative ideas from top software product management thought leaders. In these blog posts, we ask the presenters to share their insights – we encourage you to watch the full on-demand webinars for even more details.

Tristan Kromer, Lean Coach, Kromatic, presented Interpreting your Qualitative & Quantitative Data through Storyboarding. He showed how storyboarding helps you integrate qualitative and quantitative perspectives into a coherent picture of how your user’s experience impacts your business model, and how your product’s desirability connects to the viability of your business.

How do qualitative and quantitative data fit together?

Each should inform the other, helping you refine the data you collect and the decisions you make.

Quantitative data typically tells what is happening. Are people using the product? Are they paying $9.99 or $99.99? Qualitative data answers fuzzier “why” questions: Why are they paying $9.99, and would they pay more? There’s often no exact answer, and typically sample sizes are lower, but the data is richer.

If you come from an analytics or engineering background, you might say that qualitative data is only for design folks – you can just launch an A/B test and figure out what’s really going on. But we need both. We need to use quantitative data and integrate multiple sources of information, but we also need to talk to our customers and listen to their stories.

For example, let’s consider how someone might locate food deserts – geographic areas where people can’t physically access sufficient nutrition. You wouldn’t be able to find all the deserts simply by talking to people; you would need to collect a data mashup that combined public transit data, socioeconomic data, and maps of supermarket locations.

But that alone wouldn’t be enough. It might appear that a supermarket serves a certain community, but you don’t notice a barrier – such as a highway with no overpass – that separates some of the community from the store. You might miss this detail unless you asked residents if they were aware of supermarket locations and could easily access them. Conversations that offered qualitative details such as this might influence you to incorporate additional data. In this case, you might include Google Maps walking directions in your analysis.

Apply this comprehensive approach to your product. Quantitative data offers an overview, but then you need rigorous customer interviews to understand what additional data you should capture. You might discover that only 10% of your customers use a certain feature, but you have to talk to them to learn why. Is it because they don’t see it? Or because they don’t want it? Or perhaps they don’t know how to use it? The underlying reason will steer your product roadmap in a specific direction.

How do you combine qualitative and quantitative data coherently?

Begin by storyboarding: visually represent your user’s journey from before they get to the product, through their use of the product, and maybe even afterwards to how they dispose of it.

Basic example: someone goes into a bar. They hear some music. They love it but don’t know the name. They ask a friend who doesn’t know either. They ask someone else who does. That’s a storyboard for the simple app called Shazam.

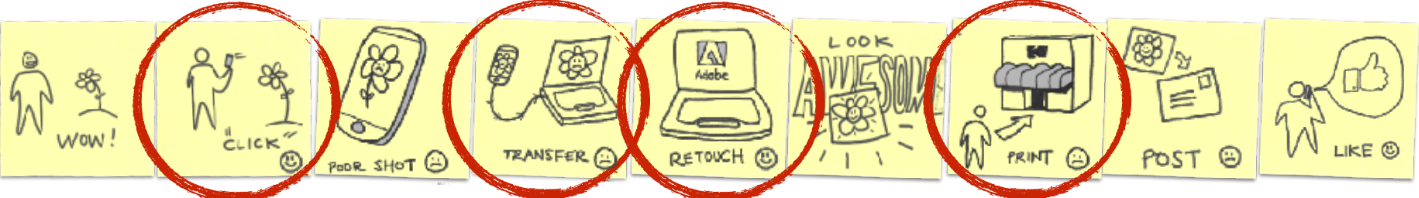

Now let’s build on that with a more complete example, based on Instagram because everyone’s familiar with it. Let’s break out our Post-It notes and Sharpies and try to map the user experience way back when there was no Instagram.

A user sees something awesome. Takes a photo of it. Transfers the photo to their computer. They’re a terrible photographer so they retouch it with Photoshop. Then they need to get it printed, mail it in an envelope, and finally their Mom calls to say, thank you for sending that lovely postcard, you’re an amazing photographer.

If you’ve done UX storyboarding, you know we also have to make sure every scene has an emotion: what’s the user feeling? For this, qualitative research will be valuable, because while I personally hate retouching photos, some people really love it.

From here, we’ll imagine our desired story. In Instagram, we first envision an acquisition experience, the user signing up for an account. For my ideal first onboarding experience, we still have someone taking a bad photo – it’s an unhappy moment, and we want them dissatisfied because if everyone takes amazing photos, who needs Instagram? So they give their photo to an editor and it magically becomes awesome. For the sharing, I want someone else seeing that photo and thinking, that’s awesome, I wish I was there, and somehow expressing that. And we want to notify the user so they can become famous and happy that so many people like their photos.

If your product is very early-stage, you should have just one main use case, meaning one specific situation in which your product could potentially be used. That’s the “minimum” part of your “minimum viable product.” If you have multiple use cases at the start, this is the time to simplify. In our example, we’ll assume everyone needs to fix their bad photos. As your product gets more complex, you might have multiple funnels – for example, a separate funnel for amazing photographers who will never need a filter.

Once you have your storyboard, how do you link quantitative data?

Your story helps you figure out what to instrument.

What can you record? Start with the number of downloads and accounts created. In an ideal world you could track the number of awesome things someone sees… how many pictures they take … how many of those pictures are terrible… the number of filters they apply… how many awesome photos came out of that… how many people say they’re jealous of those great photos.

Some of that may be impossible to track, but I at least want to think about whether there’s a way to track them. You probably can’t measure how many people are saying “oh, man, I wish I was there,” but you can measure likes, and that’ll serve as a correlated metric.

What we really want are metrics that sit between each pair of events. That’s our conversion funnel. Of the pictures people take, what percentage are bad? If 95% are fantastic, maybe there’s no big problem for us to solve. We don’t know exactly what counts as a “bad” picture, but we can look at the percentage of pictures that have a filter applied and are actually saved. The percentage of pictures that are shared might be a good proxy for how many pictures are awesome. And the percentage of people who apply a filter and then actually share the photo might correlate with how good our filters are.

Those are actionable metrics. And note: they’re percentages, because raw numbers can be misleading.

Beyond these point-to-point metrics, we might also have aggregate metrics. For example: from the people who set up an account, what percentage get all the way to sharing a picture – our ideal onboarding experience? For retention: of those who actually shared something, what percentage returned within a week?

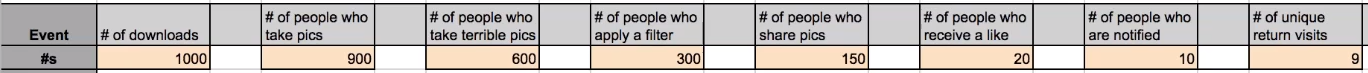

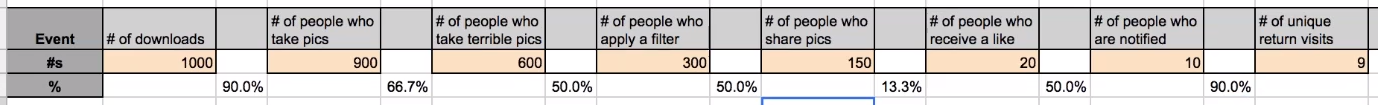

Now, based on the storyboard, I’ll build a spreadsheet to analyze our quantitative data.

We want this as simple as possible. I’ll first create a row of the actual events that were important enough to instrument, like the number of downloads, the number of people who take pictures, and so on. Below that, I enter the actual numbers I get back when I start building a product and throwing people at it. Let’s say I had 1,000 people who downloaded my app. I wound up with 900 people who took pictures, and on down.

Next, I want step-to-step conversion metrics. They’re very straightforward. I left skinny columns in here to enter them. Each is the percentage of people who continued from the previous step. For example, from Step 1 to 2, it’s the number of people who take pictures, divided by the number of app downloads.

Now I can start prioritizing, because I can see where my funnel is bad. Of the people who share a picture, the percentage who then receive a like is very low: just 13.3%. I’d want to know why. Do the photos stink? Is my user population too small?
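The step-to-step conversions described above can be sketched in a few lines of Python. This is only an illustration: it uses the counts quoted in this post, the step names are my own labels, and steps whose counts the post does not give (such as applying a filter) are left out, so the "took a picture" to "shared a picture" rate spans more than one real step. Calling the last step "returned" is also an assumption.

```python
# Counts quoted in the post; step names are illustrative labels.
# Steps with no stated count (e.g. applying a filter) are omitted.
funnel = [
    ("downloaded app", 1000),
    ("took a picture", 900),
    ("shared a picture", 150),
    ("received a like", 20),
    ("notified of like", 10),
    ("returned", 9),  # assumption: the 9 people at the end of the funnel
]


def step_conversions(steps):
    """Return (from_step, to_step, rate) for each consecutive pair of steps."""
    return [
        (prev_name, name, count / prev_count)
        for (prev_name, prev_count), (name, count) in zip(steps, steps[1:])
    ]


for prev_name, name, rate in step_conversions(funnel):
    print(f"{prev_name} -> {name}: {rate:.1%}")

# The weakest step is the first candidate for a closer look.
weakest = min(step_conversions(funnel), key=lambda row: row[2])
print("Lowest conversion:", weakest[0], "->", weakest[1])
```

With these counts the lowest rate is the step from sharing a picture to receiving a like, which is the 13.3% figure discussed above.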

I could try to impact this experience. Theoretically, for testing, I could manually like every photo and see if that got me more return visits. If I manually liked 50% of those photos, I’d expect 50% of those people to be successfully notified, because they’d turned on notifications, and 95% of the notified people to return. Will that actually happen?

Imagine we’ve now dealt with this 13.3% problem. Our funnel has three places that are still a little low, but they’re all pretty much equal. How can I prioritize?

First, what’s easiest? Would it be really simple to encourage people to activate their notifications earlier? If so, I might choose to experiment there. Second, what’s my sample size? I only have 20 people getting a like, and of these, only 10 are notified. Because the numbers are so low, the margin of error is about +/-25%. It’s a bad place to experiment because it’s hard to get a clean signal. I might run the same experiment tomorrow without changing my product and get a radically different result. (Google “sample size calculator” for tools to determine the sample size you need to get the margin of error you want.)
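For a rough feel of what a sample size calculator does, here is a minimal sketch using the standard normal-approximation formula for a proportion. It is not the method used in the webinar: the 95% confidence level and the worst-case proportion of 50% are assumptions, and the approximation is crude for samples this small, so treat the output as a ballpark only.

```python
import math

Z_95 = 1.96  # z-score for a 95% confidence level (assumed)


def margin_of_error(n, p=0.5, z=Z_95):
    """Approximate margin of error for a proportion p observed in n users."""
    return z * math.sqrt(p * (1 - p) / n)


def required_sample_size(margin, p=0.5, z=Z_95):
    """Smallest n whose approximate margin of error is at most `margin`."""
    return math.ceil(z ** 2 * p * (1 - p) / margin ** 2)


# 20 people received a like in the example funnel.
print(f"n=20: +/-{margin_of_error(20):.1%}")

# How many users would be needed for a +/-5% margin?
print("n for +/-5%:", required_sample_size(0.05))
```

Under these assumptions, 20 people give a margin of roughly 22 percentage points, the same ballpark as the figure quoted above, while a 5-point margin needs several hundred people at that step.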

Third, I can ask: how much have I been experimenting on this? If I’ve already run 20 experiments and I’m not making any progress, maybe I should refocus elsewhere.

How does all this link to money and business cases?

You want to know: How much can I spend to acquire a customer who’ll actually be profitable, for B2B, or ecommerce, or whatever?

There’s some value associated with this person – the user’s lifetime value (LTV) – whether it’s based on purchases, or subscription length, or ad revenue. We want to walk back from that value to say, how much can I afford to pay Google or Facebook to get them to my site?

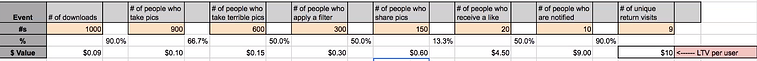

Just as an example, I’ll put in $10 as my customer’s lifetime value.

Now, I’ll work backwards. What’s it worth if someone’s notified of a like? 90% of the people who are notified will spend $10. Therefore, $10 multiplied by the conversion rate tells me the value of each notified person: $9. In my example, 9 of the 10 notified people go on to spend, so I’ll earn $90: ten dollars from each of them.

If you’re doing this right, you should see the same total value at every conversion stage. So I know that the 20 people who earn a like are also worth $90 in total: $4.50 each. And the 150 people who share a picture on our site are worth 60 cents each. And I can keep walking backwards until I know the value of someone downloading my app.

That’s simple but it’s powerful. In my fictitious example, I can spend 9 cents to acquire a user (and break even). If I’m actually spending a dollar to get one download, I’m losing 91 cents every time that happens. If I’m Instagram, I might think: There’s no way I can buy users for 9 cents each. This will only work if I find a way to make my product go viral.
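The walk-back can be written out as a short calculation. This sketch uses the $10 lifetime value and the counts quoted in this post; the step names are illustrative labels, and the $1 acquisition cost is the hypothetical figure from the paragraph above.

```python
LTV = 10.00  # example lifetime value from the post, in dollars

# Counts quoted in the post; step names are illustrative labels.
funnel = [
    ("downloaded app", 1000),
    ("took a picture", 900),
    ("shared a picture", 150),
    ("received a like", 20),
    ("notified of like", 10),
    ("returned", 9),  # the 9 people who each end up worth the full LTV
]


def value_per_user(steps, ltv):
    """Spread the total value of the final step back over every earlier step."""
    total_value = steps[-1][1] * ltv  # 9 people * $10 = $90
    return {name: total_value / count for name, count in steps}


values = value_per_user(funnel, LTV)
for name, value in values.items():
    print(f"{name}: ${value:.2f} per user")

# Break-even check against a hypothetical acquisition cost.
cost_per_download = 1.00
loss_per_download = cost_per_download - values["downloaded app"]
print(f"Loss per download at ${cost_per_download:.2f}: ${loss_per_download:.2f}")
```

The total stays at $90 at every stage; only the number of people it is divided among changes.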

You can also see the financial impact of changes to individual metrics. So if you can bump the percentage of people who apply a filter up to 75%, the amount you can pay for a user immediately goes up to 14 cents.
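Because the value of a download is the lifetime value multiplied by every conversion rate in the funnel, changing one rate scales the result proportionally. The sketch below illustrates that. Note the assumption: the post does not state the current filter rate, so the 50% baseline here is a guess, chosen only because it roughly reproduces the 14-cent figure.

```python
def value_after_rate_change(base_value, old_rate, new_rate):
    """Scale a per-user value when one conversion rate in the funnel changes.

    The value is a product of rates, so it scales by new_rate / old_rate.
    """
    return base_value * new_rate / old_rate


base_value = 0.09            # value of one download, from the post
assumed_filter_rate = 0.50   # ASSUMPTION: not stated in the post
improved_filter_rate = 0.75  # target rate mentioned in the post

new_value = value_after_rate_change(
    base_value, assumed_filter_rate, improved_filter_rate
)
print(f"Value per download: ${base_value:.3f} -> ${new_value:.3f}")
```

Under that assumed baseline, the value per download comes out at about 13.5 cents, which is close to the 14 cents mentioned above.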

This helps you prioritize. If you can significantly change a really low number, that’ll have a big impact. You can also ask, what’s the cost of the feature that improves this metric? Is it worth it?

Can you step back and get higher-level insights?

Sometimes, optimizing one step might negatively impact another step. In effect, you feel like you’re making progress when you aren’t. This is why it’s important to track how events change your overall numbers. Keep in mind these five key, high-level metrics when creating your decision-making dashboard: Acquisition, Activation, Revenue, Retention, and Referral.

Our storyboard already aligns to some of those. My Acquisition metric is the number of downloads. My Activation metric spans a wide swath of this story: from downloading the app through taking a picture through getting notified of a like. So I can measure Activation by dividing the number of people who were notified by the number who downloaded something. And I’ve simplified Retention to the number of return visits divided by the number of people who’ve taken pictures.
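Using the definitions in the paragraph above, these high-level metrics can be computed from the same event counts. This is a sketch, not the webinar’s dashboard: the counts are those quoted in this post, the variable names are mine, and treating the 9 people at the end of the funnel as the return visits is an assumption.

```python
# Counts quoted in the post; variable names are illustrative.
downloads = 1000
took_picture = 900
notified_of_like = 10
return_visits = 9  # ASSUMPTION: the 9 people at the end of the funnel

# Acquisition: raw number of downloads.
acquisition = downloads

# Activation: people notified of a like, divided by downloads.
activation = notified_of_like / downloads

# Retention (simplified): return visits divided by people who took pictures.
retention = return_visits / took_picture

print(f"Acquisition: {acquisition} downloads")
print(f"Activation:  {activation:.1%}")
print(f"Retention:   {retention:.1%}")
```

Tracking these alongside the step-to-step rates makes it easier to notice when improving one step has quietly hurt the overall numbers.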

In my example, my conversion rates are very low. To do something about that, I need to see what makes my users happy or sad. I can physically watch them apply the filter and figure out why more aren’t doing it. The quantitative methods told me what happened; now I can ask why.

That’s the final way you can use this model to prioritize. Between someone applying the filter and someone sharing a photo, I can ask: Is there anything obviously wrong that would be easy to fix? Is the Share button too small?

Can you summarize your takeaway lessons?

Absolutely:

- “KISS” – “Keep It Simple, Stupid.”

- Focus on metrics for Acquisition, Activation, Retention, Revenue, and Referral.

- Instrument numbers, but measure percentages: conversion rates are what matters.

- Use both quantitative and qualitative data to prioritize.

- Work backwards from customer lifetime value to discover what you can spend to acquire users.

- Please don’t forget the UX: it’s really critical!